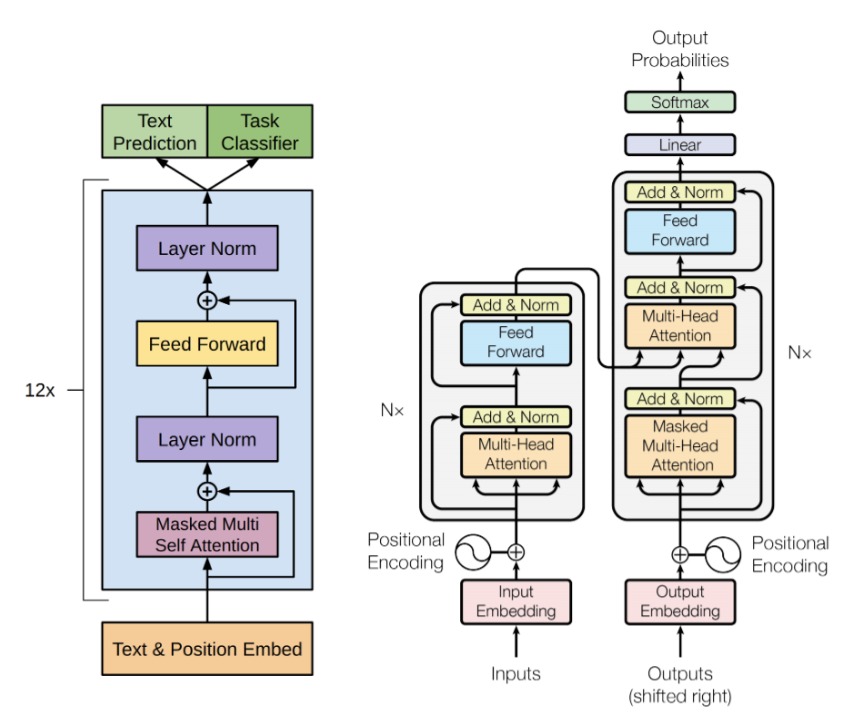

Image from “Attention Is All You Need”, Vaswani et al., arXiv:1706.03762.

This is a question for the coding community: “Would you classify the behaviour of a Large Language Model (LLM) as reductionist or (strongly) emergent?”

In conversations with Janet Ginsburg (I also raised the topic with Tim Palmer and with Mark Neyrinck), Janet mentioned the expression ‘the human algorithm’ and how it might become a new entry in the dictionary.

I have been toying with the following question:

“Whatever we call the phenomena currently occurring in LLMs, from an algorithmic point of view, are these phenomena reductionist or are they (strongly) emergent?”

Reductionism is a scientific methodology for studying a system. It rests on the premise that the behaviour of the system can be understood by breaking it down into simpler constituents and studying those instead: by understanding the simpler constituents, we can come to understand the whole. (This is a very rough description of reductionism, as you will find through a Google search.)

Strong emergence, on the other hand, is the opposite view. It can be captured by the expression:

“The whole is greater than the sum of its parts.”

This means that in a system with so many elements that it can be described as a complex system, the phenomena it exhibits can only be understood by studying the whole system at once. In such cases, the reductionist methodology of breaking the system down into simpler constituents will not explain those phenomena.

In the view of some physicists, myself included, progress in physics today may depend on whether we can identify where the reductionist assumption breaks down. (Topics that may be affected include quantum gravity, quantum computing, the black hole firewall paradox, and so on.) However, most importantly:

“What is the difference between living and non-living systems?”

This is what we try to describe using first principles in biocosmology, the science that I founded (with Stu Kauffman, Andrew Liddle, and Lee Smolin).

The development of LLMs seems to have entered this discussion in the meantime. What actually is an LLM, and is there any property of it that we suspect behaves a little like a living system?

On that, I recently hosted a live debate at the Institute of Art and Ideas on April 3rd:

“Will we ever know what it is to be alive?”

I was just the moderator, mind.